Archives

Archive for the ‘Tutorials’ Category

April 8, 2014

Creating Your Own Digital Edition Website with Islandora and EMiC

I recently spent some time installing Islandora (Drupal 7 plus a Fedora Commons repository = open-source, best-practices framework for managing institutional collections) as part of my digital dissertation work, with the goal of using the Editing Modernism in Canada (EMiC) digital edition modules (Islandora Critical Edition and Critical Edition Advanced) as a platform for my Infinite Ulysses participatory digital edition.

If you’ve ever thought about creating your own digital edition (or edition collection) website, here’s some of the features using Islandora plus the EMiC-developed critical edition modules offer:

- Upload and OCR your texts!

- Batch ingest of pages of a book or newspaper

- TEI and RDF encoding GUI (incorporating the magic of CWRC-Writer within Drupal)

- Highlight words/phrases of text—or circle/rectangle/draw a line around parts of a facsimile image—and add textual annotations

- Internet Archive reader for your finished edition! (flip-pages animation, autoplay, zoom)

- A Fedora Commons repository managing your digital objects

If you’d like more information about this digital edition platform or tips on installing it yourself, you can read the full post on my LiteratureGeek.com blog here.

November 23, 2011

Call for Papers: Public Poetics: Critical Issues in Canadian Poetry and Poetics

Mount Allison University, Sackville NB, 20–23 September 2012

Poetic discourse in Canada has always been changing to assert poetry’s relevance to the public sphere. While some poets and critics have sought to shift poetic subjects in Canada to make political incursions into public discourses, others have sought changes in poetic form as a means to encourage wider public engagement. If earlier conversations about poetics in Canadian letters, such as those in the well-known Toronto Globe column “At the Mermaid Inn” (1892-93), sought to identify an emerging cultural nationalism in their references to Canadian writing, in the twentieth century poetics became increasingly focused on a wider public, with little magazines, radio, and television offering new spaces in which to consider Canadian cultural production. In more recent decades, many diverse conversations about poetics in Canada have begun to emanate from hyperspace, where reviews, interviews, Youtube/Vimeo clips, publisher/author websites, and blogs have increased the “visibility” of poetry and poetics.

Acknowledging the work that emerged from the 2005 “Poetics & Public Culture in Canada Conference,” as well as recent publications considering publics in the Canadian context, we are interested in examining a growing set of questions surrounding these and other discursive shifts connected with Canadian poetry and poetics. How have technological innovations such as radio, television, and the Internet, for example, made poetry and poetics more accessible or democratic? How does poetry inhabit other genres and media in order to gesture toward conversations relevant to political, cultural, and historical moments? What contemporary concerns energize those studying historical poetries and poetics? How do commentators in public and academic circles construct a space for poetry to inhabit?

The conference sets out to explore the changing shapes of and responses to poetic genres, aesthetic theories, and political visions from a diverse range of cultural and historical contexts. In the interest of reinvigorating conversations about the multiple configurations of poetics, poetry, and the public in Canada, we invite proposals for papers (15–20 minutes) on subjects including, but not limited to:

–Public statements/declarations of poetics

–Publics and counterpublics in Canadian poetry

–The politics of public poetics

–Tensions between avant- and rear-garde poetics in Canada

–Shifting technological modes of poetic and critical production (print/sound/video/born-digital)

–Poetics of/as Activism

–Public Intellectualism and Poetics

–Recovery and remediation of Canadian poetry and poetics

–Poetics and collaboration in Canada

–People’s poetry and /or the People’s Poetry Awards

–Poetry and environmental publics in Canada

Proposals should be no more than 250 words and should be accompanied by a 100-word abstract and a 50-word biographical note. Please send proposals to publicpoetics@mta.ca by 29 February 2012. For more information visit www.publicpoetics.ca.

In conjunction with the conference, a one-day workshop will be hosted by The Canadian Writing Research Collaboratory / Le Collaboratoire scientifique des écrits du Canada. This purpose of this workshop (CWRCshop) is to introduce, in accessible and inviting ways, digital tools to humanities scholars and to encourage digital humanists, via a turn to close reading, to connect with the raw material, which is the basis of digitization efforts.

The PUBLIC POETICS conference is organized by Bart Vautour (Mt. A), Erin Wunker (Dal), Travis V. Mason (Dal), and Christl Verduyn (Mt. A). The conference is sponsored by the Centre for Canadian Studies at Mount Allison University, the Canadian Studies Programme at Dalhousie University, and The Canadian Writing Research Collaboratory / Le Collaboratoire scientifique des écrits du Canada. We plan to publish a selection of revised/expanded papers as a special journal issue and/or a book with a university press.

February 17, 2011

How to Post a Blog to the EMiC Community

Have you been enjoying reading the EMiC Community blog? Do you want to post something of your own but are unsure of how to do so? This post will walk you step-by-step through the process of posting your first blog to the EMiC Community space.

When you visit any page on the EMiC Website, you’ll see a link to “Log in” at the top right of the page.

Click on this link you’ll be a taken to a page where you can enter your login details, which you will have received when you signed up as a member on the site. If you’ve forgotten your password, click on the “Lost your password?” link below the log in box.

Once you’re logged in, you’ll be taken to the user dashboard.

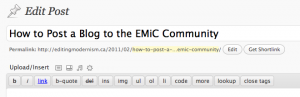

To post a blog entry, click on “New post” on the top right of your screen (or “Add new” under “Posts” on the left navigation bar). You’ll be redirected to the post entry page.

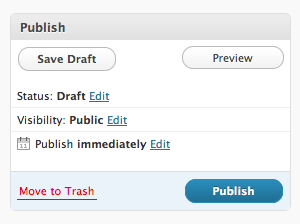

On the new post screen you’ll have the option (on the right) to choose to view a preview, save a draft, or publish your post. Your revision history will be listed at the bottom of the page. Your post won’t be visible on the public site until you click “Publish”.

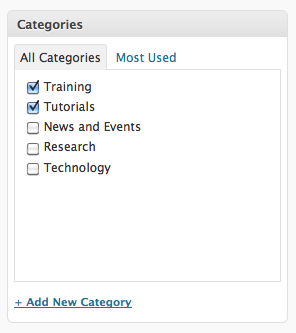

You will also have the option to create categories and tags for your post. You can choose to place your post in the following categories: Training, Tutorials, News and Events, Research, and/or Technology.

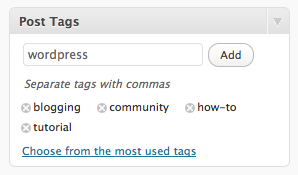

You may also wish to create tags (or keywords) for your post. You can do this in the “Post Tags” box.

When you preview or view a post, you might find that you need to return to the editing screen. Any time you want to get back to the WordPress Dashboard when you’re logged in, you can click the link at the top right of your screen.

It’s really as easy as that! We encourage all of our members to use the blog as a forum for public dissemination of knowledge about all EMiC-related research and training. Your blog posts are a crucial means of communicating with project participants, partners, and the general public. We want to hear from you!!

Still have questions? Email me at mbtimney@uvic.ca.

September 22, 2010

Archiving for Our Times: A Year as a Digital Intern

In September of 2009, I was in my final year as an undergraduate student of English and beginning a digital internship with EMiC. Being a part of EMiC was at first a bit unnerving, and I sometimes felt like a lamb in a den of lions. My task was to scan the many collections of Dorothy Livesay’s poems, and later I had to seek out and scan individual poems as first published in journals and magazines. As I meekly turned pages and fiddled with focus knobs, I dared not devour her works, but instead handled them carefully and reverentially, grazing library shelves for the next poem. The hours in the scanning room provided tedium like a short fast, starving and renewing the system, and the materiality of each text was fascinating, as we encountered errors in page numbering and other quirks. I had had a formal introduction to Livesay’s work in a seminar class during which I was very interested in her politics, but it was while touching the books, reading them and contemplating them on my own, that I gradually got to know her as a woman and as a poet. I absorbed much when, unable to help myself, I quickly read each poem as it was laid out under the lens, or when I paused to read a stanza while editing, and I got a better feel for her recurring images and themes, for the absolute beauty of her words. While I learned much about scanning and editing, it was this availability of Livesay’s body of works that was the truly valuable education to me.

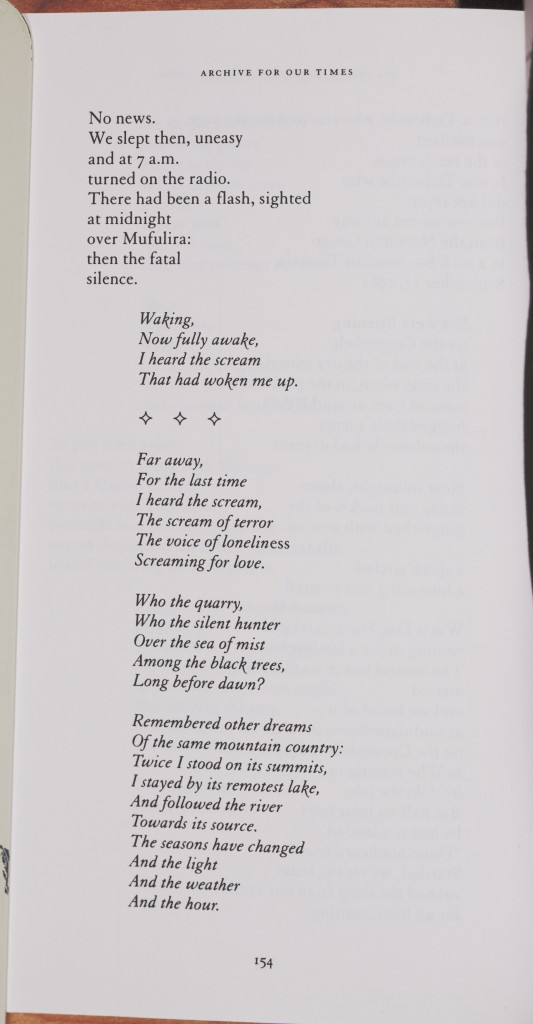

Nevertheless, I felt like a real digital intern as I learned the value of Photoshop in the task of presenting Livesay’s work to a digital audience. I found myself contemplating how to satisfactorily record the material and artistic history of an era of terrifying newness when the pieces of this time have become battered and bent, musty and old, and the scanner we use is finicky and seems itself to be caught in some mechanical process of decay. The technology of our times and the act of digitizing can help recover the sense of newness that inspired and pushed poets like Dorothy Livesay to write, but it takes a bit of doing. The problem in Photoshop became less about how simply to straighten an image, and more about how much of the image can be recreated to look more like reality than the scanner made possible. As it happens, book scans often turn out like this, where it had been impossible to flatten the page without damaging the book:

(Poem: “The Hammer and the Shield”)

You thus learn as you go, resolving issues and learning how to fill in the gaps between image-as-it-is and image-as-it-should-be. The following provides a number of tools in Photoshop that can be used for editing scanned book pages like the one above. I hope it proves useful.

Before editing anything, hit Save As and change the file name to indicate that it is an edited file (this way you never accidentally lose the original scan). Then straighten the image so that most of the text is straight (Image: Image Rotation: Arbitrary: 0.25% is very useful here). Grid lines also help you get the straightening exact (View: Show: Grid). Then crop the image and fill in the edges using the Brush tool (use the Eyedropper tool to pick up the background colour first); both tools are in the Tools area on the left. You should by then have something like this (showing grid lines).

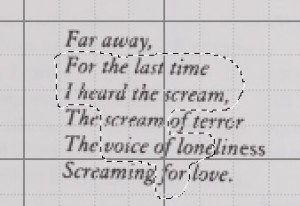

The crooked text needs to be rotated separately from the rest of the image. To do this, 1) use the Marquee tool or the Lasso tool to get around the text or between the lines (both tools are in Tools on the left). 2) When you have the portion of text that needs to be rotated, right-click and hit “Layer via Copy.” This copies the selected portion of the image into a layer, which is a piece of image that can be fiddled with separately from the background image or other layers. (Ensure, especially when you’ve created several layers, that the Background layer is selected when you click “Layer via Copy.”)

(A demonstration of the Lasso tool in action; so useful when the project requires a fussy amount of detail)

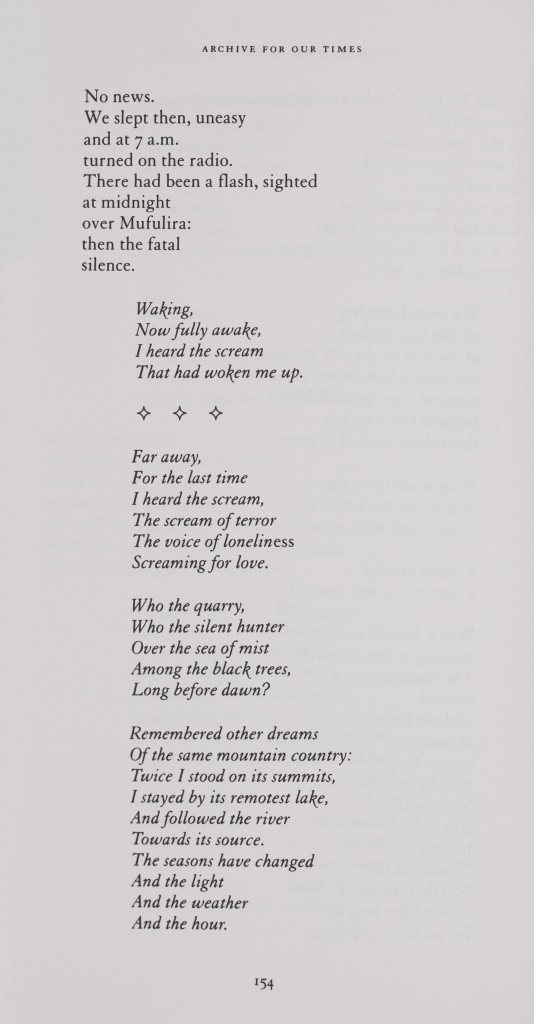

3) Go to Edit: Transform: Rotate, and mouse over the corner of the text until you see a curvy arrow. Click, hold, and move the mouse to rotate the text until it is straight. At this stage you can also use the arrow keys on your keyboard to move the layer slightly in different directions to line it up properly.

When happy with the image, go to Layer: Flatten Image. (You may want to do this periodically if you find yourself creating many layers, especially for an image like the one above). This lessens the file size considerably, and the file size is already going to be ginormous. At this point, change the image size to whatever is needed. Then check the extension on the file name at the top (to make sure you did a Save As at the beginning of your work on this page) before hitting Save. Hopefully you will get something like this (or better):

July 12, 2010

TEI @ Oxford Summer School: Intro to TEI

Thanks to the EMiC project, I am very fortunate to be at the TEI @ Oxford Summer School for the next three days, under the tutelage of TEI gurus including Lou Burnard, James Cummings, Sebastian Rahtz, and C. M. Sperberg-McQueen. While I’m here, I’ll be providing an overview of the course via the blog. The slides for the workshop are available on the TEI @ Oxford Summer School Website.

In the morning, we were welcomed to the workshop by Lou Burnard, who is clearly incredibly passionate about the Text Encoding Initiative, and is a joy to listen to. He started us off with a brief introduction to TEI and its development from 1987 through to the present (his presentation material is available here). In particular, he discussed the relevance to the TEI to digital humanities, and its facilitation of the interchange, integration, and preservation of resources (between people and machines and between different media types in different technical contexts). He argues that the TEI makes good “business sense” for the following reasons:

As a learning exercise, we will be encoding for the Imaginary Punch Project, working through an issue of Punch magazine from 1914. We’ll be marking up both texts and images over the course of the 3-day workshop.

After Lou’s comprehensive summary of some of the most important aspects of TEI, we moved into the first of the day’s exercises: an introduction to oXygen. While I’m already quite familiar with the software, it is always nice to have a refresher, and to observe different encoding workflows. For example, when I encode a line of poetry, I almost always just highlight the line, press cmd-e, and then type a lower case “L”. It’s a quick and dirty way to breeze through the tedious task of marking-up lines. In our exercise, we were asked to use the “split element” feature (Document –> XML Refactoring/Split Element). While I still find my way more efficient for me, the latter also works quite nicely, especially if you’re using the shortcut key (visible when you select XML Refactoring in the menu bar).

Customizing the TEI

In the second half of the morning session, Sebastian provided an explanation of the TEI guidelines and showed us how to create and customize schemas using the ROMA tool (see his presentation materials). Sebastian explained that TEI encoding schemes consist of a number of modules, and each module contains element specifications. See the WC3 school’s definition of an XML element.

How to Use the TEI Guidelines

You can view any of these element specifications in the TEI Guidelines under “Appendix C: Elements“. The guidelines are very helpful once you know your way around them. Let’s look at the the TEI element, <author>, as an example. If you look at the specification for <author>, you will see a table with a number of different headers, including:

<author>

the name of and description of the element

Module

lists in which modules the element is located

Used By

notes the parent element(s) in which you will find <author>, such as in <analytic>:

<analytic>

<author>Chesnutt, David</author>

<title>Historical Editions in the States</title>

</analytic>

May contain

lists the child element(s) for <author>, such as “persName”:

<author persName=”Elizabeth Smart”>Elizabeth Smart</author>

Declaration

A list of classes to which the element belongs (see below for a description of classes).

Example and Notes

Shows some accepted uses of the element in TEI and any pertinent notes on the element. On the bottom right-hand side of the Example box, you can click “show all” to see every example of the use of <author> in the guidelines. This can be particularly useful if you’re trying to decide whether or not to use a particular element.

—

TEI Modules

Elements are contained within modules. The standard modules include TEI, header, and core. You create a schema by selecting various modules that are suited to your purpose, using the ODD (One Document Does it all) source format. You can also customize modules by adding and removing elements. For EMiC, we will employ a customized—and standardized—schema, so you won’t have to worry too much about generating your own, but we will welcome suggestions during the process. If you’re interested in the inner workings of the TEI schema, I recommend playing around with the customization builder, ROMA. I won’t provide a tutorial here, but please email me if you have any questions.

TEI Classes

Sebastian also covered the TEI Class System. For a good explanation what is meant by a “class”, see this helpful tutorial on programming classes (from Oracle), as well as Sebastian’s presentation notes. The TEI contains over 500 elements, which fall into two categories of classes: Attributes and Models. The most important class is att.global, which includes the following elements, among others:

@xml:id

@xml:lang

@n

@rend

All new elements are members of att.global by default. In the Model class, elements can appear in the same place, and are often semantically related (for example, model.pPart class comprises elements that appear within paragraphs, and the model.pLike class comprises elements that “behave like” paragraphs).

We ended with an exercise on creating a customized schema. In the afternoon, I attended a session on Document Modelling and Analysis.

If you’re interested in learning more about TEI, you should also check out the TEI by Example project.

Please email me or post to the comments if you have any questions.

June 17, 2010

Summary of EMiC Lunch Meeting, June 11, 2010

On the final day of DHSI, EMiC participants gathered for an informal meeting to discuss their summer institute experiences and to plan for the upcoming year. Dean and Mila attended via Skype (despite some technical difficulties). We began by going around the table and talking about our week at DHSI. We reviewed the courses that we took, discussed our the most helpful aspects, and least. The participants who had taken the TEI FUNdamentals course agreed that the first few days were incredibly useful, but that the latter half of the course wasn’t necessarily applicable to their particular projects. Dean made the comment that we should pick the moments when we pay attention, and work on our material as much as possible. Anouk noted the feeling of achievement (problem-solving feedback loop), and her excitement at the geographical scope involved in mapping social networks and collaborative relationships as well as standard geographical locations. It sounds like everyone learned a lot!

We also agreed that there is a definite benefit in taking a course that also has participants who are not affiliated with EMiC; the expertise and perspective that they bring to the table is invaluable.

After our course summaries, we began to think about the directions in which we want to take EMiC. We discussed the following:

1. The possibility of an EMiC-driven course at DHSI next year, which I will be teaching in consultation with Dean and Zailig

a. The course will likely be called “Digital Editions.”

b. It will be available to all participants at the DHSI, but priority registration will be given to EMiC partipants.

c. It will include both theoretical and practical training in the creation of digital editions (primarily using the Image Markup Tool), but also including web design and interface models.

d. We will develop the curriculum based on EMiC participants’ needs (more on this below).

2. Continued Community-Building

The courses provided us with ideas of what we want to do as editors, and allowed us to see connections between projects. The question that followed was how we will work with one another, and how we sustain discussion.

a. We agreed that in relation to the community, how we work together and what our roles are is very important, especially as they related to encoding and archival practice.

b. We discussed how we would continue to use the blog after we parted ways at the end of the DHSI. Emily suggested that we develop a formalized rotational schedule that will allow EMiC participants at different institutions to discuss their work and research. We agreed that we should post calls for papers and events, workshop our papers, and use the commenting function as a means of keeping the discussion going. (Other ideas are welcome!)

c. We discussed other ways to solidify the EMiC community, and agreed that we should set up EMiC meetings at the different conferences throughout the year (MSA, Congress, Conference on Editorial Problems, etc).

3. What’s next?

a. For those of you who are interested in learning more about text encoding, I encourage you to visit the following sites:

• WWP Brown University: http://www.wwp.brown.edu/encoding/resources.html

• Doug Reside’s XML TEI tutorial: http://mith2.umd.edu/staff/dreside/week2.html

b. We are hoping that there will be an XSLT course at the DHSI next year.

c. Next year’s EMiC Summer Institute line-up will include 3 courses: TEMiC theory, TEMiC practice, and DEMiC practice.

d. Most importantly, we determined that we need to create a list of criteria: what we need as editors of Canadian modernist texts. Dean requested that everyone blog about next year’s EMiC-driven DHSI future course. Please take 15-20 minutes to write down your desiderata. If you can, please come up with something of broad enough appeal that isn’t limited to EMiC. (Shout out to Melissa for posting this already!)

As a side note, I spoke with Cara and she told me that there is indeed going to be a grad colloquium next year, which will take place on Tuesday, Wednesday, and Thursday afternoon during the DHSI. Look for a call for papers at the end of summer.

It was lovely to meet everyone, and I am looking forward to seeing you all soon!

**Please post to the comments anything I’ve missed. kthxbai.

June 11, 2010

IMTweet

#emicClouds

A little DHSI playtime for you. First, two word clouds: one of the DHSI Twitter feed, the other of the EMiC Twitter feed. Both feeds were collected using the JiTR webscraper, a beta tool in development by Geoffrey Rockwell at the University of Alberta.

How did I do this? First I scraped the text from the Twapper Keeper #dhsi2010 and #emic archives into JiTR. I did this because I wanted to clean it up a bit, take out some of the irrelevant header and footer text. Because JiTR allows you to clean up the text (which is not an option in the Twapper Keeper export) you don’t have to work with messy bits that you don’t want to analyze. After that I saved my clean texts and generated what are called “reports.” The report feature creates a permanent URL that you can then paste into various TAPoRware tools. I ran the reports of the #dhsi2010 and #emic feeds through two TAPoRware text-analysis tools, Voyeur and Word Cloud.

If you want to generate these word clouds and interact with them, paste the report URLs I generated using JiTR into the TAPoR Word Cloud tool.

June 9, 2010

A Voyeur’s Peep] Tweet

To build on Stéfan Sinclair’s plenary talk at DHSI yesterday afternoon, I thought it appropriate to put Voyeur into action with some born-digital EMiC content. Perhaps one day someone will think to produce a critical edition of EMiC’s Twitter feed, but in the meantime, I’ve used a couple basic digital tools to show you how you can take ready-made text from online sources and plug it into a text-analysis and visualization tool such as Voyeur.

I started with a tool called Twapper Keeper, which is a Twitter #hashtag archive. When we were prototyping the EMiC community last summer and thinking about how to integrate Twitter into the new website, Anouk had the foresight to set up a Twapper Keeper hashtag archive (also, for some reason, called a notebook) for #emic. From the #emic hashtag notebook at the Twapper Keeper site, you can simply share the archive with people who follow you on Twitter or Facebook, or you can download it and plug the dataset into any number of text-analysis and visualization tools. (If you want to try this out yourself, you’ll need to set up a Twitter account, since the site will send you a tweet with a link to your downloaded hashtag archive.) Since Stéfan just demoed Voyeur at DHSI, I thought I’d use it to generate some EMiC-oriented text-analysis and visualization data. If you want to play with Voyeur on your own, I’ve saved the #emic Twitter feed corpus (which is a DH jargon for a dataset, or more simply, a collection of documents) that I uploaded to Voyeur. I limited the dates of the data I exported to the period from June 5th to early in the day on June 9th, so the corpus represents the #emic feed during the first few days of DHSI. Here’s a screenshot of the tool displaying Twitter users who have included the #emic hashtag:

As a static image, it may be difficult to tell exactly what you’re looking at and what it means. Voyeur allows you to perform a fair number of manipulations (selecting keywords, using stop word lists) so that you can isolate the information about word frequencies within a single document (as in this instance) or a whole range of documents. As a simple data visualization, the graph displays the relative frequency of the occurrence of Twitter usernames of EMiCites who are attending DHSI and who have posted at least one tweet using the #emic hashtag. To isolate this information I created a favourites list of EMiC tweeters from the full list of words in the #emic Twitter feed. If you wanted to compare the relative frequency of the words “emic” and “xml” and “tei” and “bunnies,” you’d could either enter these words (separated by commas) into the search field in the Words in the Entire Corpus pane or manually select these words by scrolling through all 25 pages. (It’s up to you, but I know which option I’d choose.) Select these words and click the heart+ icon to add them to your favourites list. Then make sure you select them in the Words within the Document pane to generate a graph of their relative frequency. If want to see the surrounding context of the words you’ve chosen, you can expand the snippet view of each instance in the Keywords in Context pane.

Go give it a try. The tool’s utility is best assessed by actually playing around with it yourself. If you’re still feeling uncertain about how to use the tool, you can watch Stéfan run through a short video demo.

While you’re at it, can you think of any ways in which we might implement a tool such as Voyeur for the purposes of text analysis of EMiC digital edtions? What kinds of text-analysis and visualization tools do you want to see integrated into EMiC editions? If you come across something you really find useful, please let me know (dean.irvine@dal.ca). Or, better, blog it!

June 7, 2010

The First Meeting

It is finally here! The bunnies are hopping, the sky is grey, and the sleep-deprived, jet lagged EMiC contingent finally comes together.

After a bit of a rough start with no A/V and an unexplained lost pizza order, when I arrive on scene forty minutes before the meeting, my confidence is slightly shaken. I dig through my backpack for my “backup” laptop and USB key, and I interrupt a lady in a chef’s hat.

“Excuse me, but do you know if this room is equipped with A/V?”

Blank stare.

“I apologize, but do you know where I could set up a power point presentation?”

Blink, blink.

“I need to use a computer for this meeting and I need a screen to project the image on. Do you have any idea who I might contact?”

Mouth starts to fall open.

“Can you tell me where our pizza is?”

“OH! It’ll be here just after seven. Sorry about that!”

It’s all good. I can work with this.

I survey the room, note the large amount of M&M cookies, and I breathe a sigh of relief. People like cookies. And, these tables move. We are good to go. When Zailig arrives, the number of laptops double. Megan comes, and now we have three. From this point forward, it is smooth sailing.

As the participants trickle in, my heart is filled with joy. They are here, they are happy, and everyone has a bed to sleep in. My work here (in this regard) is complete.

As we set up “viewing stations” and plug in the presentation on each laptop, I realize that this is a far more communal experience than watching a powerpoint on the big screen. People huddle together, talking and laughing as the set-up process takes place. Happy visions of networking and collaboration are made tangible.

Zailig brings the meeting to order after intros have taken place. He clarifies he is reading Dean’s paper, and then goes straight into it. Dean’s personal anecdotal remarks are not altered. Zailig uses Dean’s personal pronoun, much to my delight. As he regales us as “Dean”, I remember listening to this talk two weeks ago. Then, I flash back to meeting Dean for the first time at DHSI last year.

What an impressive learning curve this project has experienced in the last year. If it wasn’t clear before, it is definitely clear now: Dean is a super-human. I can’t believe all the work (and heart) he, Megan, Zailig, Vanessa (… and all of you!) have put into bringing Editing Modernism in Canada into its second year, and its second DEMiC. It is such an exciting time.

Though I have plenty more I could say, I just want to express my delight that you are all here. It is going to be a great week, and I look forward to visiting with all the DEMiC participants throughout the week.

Goodnight, friends. I can’t wait to see what tomorrow brings.