Archives

Author Archive

June 3, 2014

“A Collaborative Approach to XSLT” and a Riddle

I just finished my second day in the EMiC-sponsored course “A Collaborative Approach to XSLT.” This is a new offering at DHSI, and it is fairly original in its design—it is team-taught by a father and son duo, Zailig and Josh Pollock. This pedagogical model—with Zailig as the digital humanist, and Josh as the programmer—works really well for the course, which stresses the importance of successful collaboration. The premise of the course is that “few digital humanists have the time or inclination to master the complex details of [TEI] implementation,” and that instead, what a digital humanist should learn is enough basic vocabulary and skills so that they can communicate with a programmer. This course, therefore, practices what it preaches, with Zailig providing concrete examples in which a scholar might use a complex piece of code and with Josh able to effectively answer all of the more technical questions.

Although it is still early in the week, I can confidently state that this is one of the best courses I have taken at DHSI/DEMiC. Zailig and Josh have clearly spent a long time designing and thinking about the best way to teach this course. The course’s structure, so far, has been that a concept is explained followed by a small exercise where the students have to put this concept into practice. Rinse and repeat. After two days, we have run through twelve exercises, teaching (or refreshing) students about: XML, HTML, CSS, XSLT, and XPaths. Although we have covered a large amount of material in two days is large, the method of periodically stopping and practice each concept as we proceed has ensured that everyone understands and is on track.

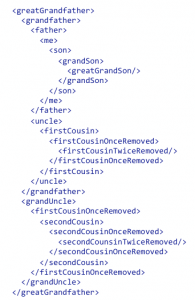

Josh successfully demonstrated how far we have come as a class when late Tuesday afternoon he posted a riddle, and demonstrated how we could solve it using XPaths. For the curious, the riddle is: “Brothers and sisters have I none. This man’s father is my father’s son.” To solve this riddle, you simply need an .xml file and some Xpaths.

count(//me/preceding-sibling::* | //me/following-sibling::*)=0

[Result: true]

//*[.. = //me/../*]

[Result: son]

March 4, 2014

The Digital Page: Brazilian Journal

I began working with the Brazilian Journal four years ago, when Suzanne Bailey was working on a print-based critical edition of the text for Porcupine’s Quill. Brazilian Journal began as a diary kept by Canadian poet P.K. Page, from 1957-59, while she accompanied her husband to Brazil, where he was serving as Canada’s ambassador. When Suzanne and I began working on the text we were under the assumption that the only existing manuscript was Page’s original diary pages, now stored at the LAC. This situation simplified the editorial task, as we chose the first print edition of the text, published by Lester & Orpen Dennys on June 27, 1987, as our copytext.

Shortly before our new edition went to print, however, we discovered eight heavily annotated typescripts of the Brazilian Journal that showed a very clear evolution of the text from Page’s diary in the late 1950s to the polished text published in 1987. While vastly informative for literary critics studying both the text and Page’s work as a whole, these typescripts presented us with thousands of pages of new material. There was no easy way to present this genesis of the text, with its thousands of variants, in a print edition, and still have it be usable as a clear reading text. Zailig Pollock, as editor of the Collected Works of P.K. Page Project is attempting to create digital editions that do both. His solution is to create two separate, but related, publications: a printed edition, and an online database. The print editions, published by Porcupine’s Quill, are intended for general readers and students, rather than as primary scholarly resources. The online database, however, will display digital facsimiles of every version of the work—both manuscripts and published. The Brazilian Journal was chosen as the first of Page’s texts to undergo this two-pronged approach to a scholarly edition, and we finally have a working prototype of the digital database—what Zailig calls the Digital Page—to share with the wider EMiC community.

The digital database had to meet several requirements. First, it had to display a facsimile of each manuscript page. Second, it had to provide a clear narrative outlining the genesis of each manuscript page, placing all the changes and corrections in chronological order. Third, it had to present a clean reading text. The current prototype does all three of these things. Zailig has written a very useful guide to navigating the database’s interface [click here to read guide].

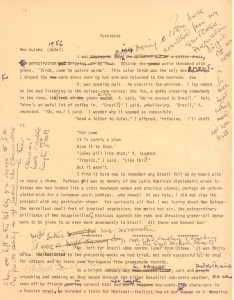

I thought it would be interesting to quickly show some of the work that went into building this prototype. Thanks to the help of some excellent RAs, we began by scanning all of the eight typescripts at a high-resolution to ensure that they would be readable online. (Each typescript is over 300 pages, so this was not a quick task.) After we had each typescript scanned, we began transcribing each page. We used OCR to help when we could, but often Page’s corrections and marginalia made this impossible. This is the first page of the first manuscript. While it is more heavily marked up than the average page, it does show what we were working with. We have identified five unique “editing sessions” on this page alone.

A session or ‘campaign’ as it is sometimes called represents a self-contained set of revisions. That is, all the revisions identified as being from a single session follow all of the revisions from earlier sessions and precede all of the revisions in following sessions. Within a session the chronological relationship amongst various sets of revisions is usually not obvious. There is no reason, for example to assume that revisions made on the first page precede ones on the last page. In contrast a revision in Session A to the past page of the document will precede all revisions in Session B, including those on the first page. The teasing out of the different sessions is base partly on writing material—pencil or ink of various colours or felt pen, in the case of Brazilian Journal—but other internal, and external evidence can come into play. The digital edition will include a discussion of the sessions and our determination of them.

We have identified six different sessions in the first manuscript. The five shown on the first page are:

A – typescript

B – felt pen

C – blue ink

D – pencil

E – black ink

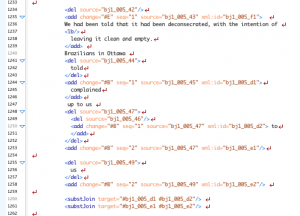

After transcribing each page, we had to code each page using TEI markup. Zailig developed a very specific set of tags, all of which are TEI compliant, to mark all variants in the text. Alongside this tag-set, we also tagged each “revision site” to its corresponding location on the manuscript page. By doing this, we have linked all revisions in the transcription with the manuscript page itself. This ensures that it is very easy for a reader to understand where a change takes place. (If you look at the prototype, you can see this linking in practice.) A sample of our coding for this first page looks like this:

After coding each page, we run the XML file through an XSLT program designed by Zailig’s son Josh, which outputs the HTML that you can see in the prototype. (If you are interested in learning about XSLT, you should check out the EMiC-sponsored DHSI course being taught this year by Zailig and Josh—“A Collaborative Approach to XSLT.”)

This is a lengthy-process, with the coding of each page taking at least thirty minutes—a page like the one above takes much more. As a result, it will take years to finish this project. But we are aware of this limitation, and have designed the digital database to accommodate this. We currently envision the Digital Page website to post new material as it becomes available. Thus, we will post the scans of each manuscript first. Then we will post the transcriptions, and finally, the coded pages. This will allow scholars to have access to the material before the digital edition is complete.

The prototype is still being in its beta phase. Zailig and I are constantly tweaking the coding when we encounter new problems that we need to solve. Josh is constantly adjusting the XSLT to ensure that the resulting HTML performs as it should. There are two limitations to the interface as it currently stands which we will be working on in future. The first is that currently the user can only move from any revision in the typescript to the image. Josh is currently writing code that will allow the user to move in the other direction, from any revision point in the image to the equivalent point in the transcription. The user will also be able to see at a glance the sequence of the revisions through the various, colour-code sessions. The next step, which is farther down the road, but which does not seem to present any insuperable difficulties, is to allow the user to follow the genesis through all of the relevant documents

If you have any comments or suggestions on our prototype, we would welcome them!

May 13, 2011

What I learned as a Research Assistant…

For the last year, I have been an EMiC-funded research assistant on a number of the P.K. Page projects been worked on at Trent University. Primarily, I have been working on Page’s Brazilian Journal, edited by Suzanne Bailey—due out in print in the fall of 2011, published by Porcupine’s Quill—as well as Page’s Mexican Journal, edited by Margaret Steffler. My responsibilities have included: transcribing typescript manuscripts into a digital file, proofreading, fact-checking, and creating annotations. Now that the Brazilian Journal is in the hands of the publisher, I thought I’d share some of the knowledge I have gained over the last year.

The joys and problems of manuscripts! For the Brazilian Journal, we were lucky to find a copy of the manuscript in LAC. The manuscript was a typescript—most likely the actual journal Page kept while in Brazil (1957-1959). This journal was then later edited by Page, and published in 1987 by Lester & Orphen Dennys. Therefore, with this typescript manuscript, we were able to identify what changes—alterations, deletions, re-wording—that Page had made to the journal before it was originally published. This was useful for a number of reasons. Primarily, it gave insight into Page’s creative process. By looking at what passages Page deleted and speculating on the reasons for the deletions—was she censoring herself, removing private issues, or trying to prevent herself from embarrassing friends—it is possible to see how Page wrote for herself, as well as how she wanted to be seen by the public. As the copy-text for the new edition of the Brazilian Journal is the 1987 published version, and not the manuscript, few of the changes between the two text will be mentioned in the new edition. These changes, however, will all be made available when the digital edition of Page’s work goes live. While one version of the manuscript was incredibly useful, recently a number of other copies have been discovered. Some of these manuscripts have marginalia, and other notes, which will be incredibly useful. The numerous manuscripts also present potential problems—trying to organize them chronologically, discover when they were written, for what purpose they were created, etc. Thankfully the new print version of the text did not use the manuscript as a copy text, so these problems can be addressed between now and when the digital edition goes live.

Publisher and editor relationship. While my studies are in book history, and I have read numerous accounts of how the relationship between publisher and author can affect the final appearance of a text, it is still interesting (frustrating?) to experience these discussions first-hand. Due to a limitation on page count, numerous discussions were had over what to include in the final text, and what to exclude. How long should the index be? Are all the terms in the index necessary? Can we make the annotations shorter, without losing valuable information? Etc. This experience has only made me question the editorial practice of every book I read even more—what was excluded? Why? On whose request? Was it for financial reasons? Practical reasons? I strongly believe that this sort of critical approach to any critical edition is both appropriate and responsible. Yet, it took being involved in these types of discussions myself before this opinion became solidified.

Creating annotations is an incredibly humbling experience. When I first started making annotations, I naïvely assumed that I would know most of the things that needed annotation, and would be able to write annotations for them easily. Once I began, however, I quickly realized that I was dead wrong. As I began reading the text extremely closely, underlining anything that I did not understand, or that I thought others wouldn’t understand, I quickly developed a very long list, with most of the entries coming from the former. I started to worry—clearly I am an ignorant fool, if there are so many allusions and references that I don’t understand. After a few moments of self-deprivation, I decided that the only way to continue was to assume that I wasn’t a ignorant fool, but that Page was brilliant—therefore, including references that the average joe would not recognize. As well, the journal was written in the late 50s, thirty-years before I was born. After accepting my ignorance, the job of annotating actually became thrilling, and incredibly rewarding. Tracking down obscure references was fun, and often illuminating. I quickly discovered that I was right about Page—she was brilliant. While writing her journal in Brazil, surely without a large library of texts, she is able to quote from a vast array of sources, presumably all from memory. Even with the help of Google, tracking down some of these sources was difficult, and yet Page knew them by heart. Despite having read the journal half-a-dozen times by this point, I learned more about Brazil through creating the annotations than I did by reading the journal. I also learned a lot about Page, and also about how I read (apparently I often skip allusions and references I don’t understand). And most importantly, I gained a new appreciation for annotations found in critical editions that are done well, and an even greater disdain for ones that are done poorly.

I wanted to share one example of how rewarding and important annotations can be to a critical edition of a text. In the Brazilian Journal, Page writes of “the Ricketts-blue bay” (128). It took me a while to track down what Page was referring to, despite the fact that I knew it had to be something blue, when eventually, I stumbled across these images–image 1, image 2. As soon as I saw these images, I knew I had discovered the proper allusion—and that feeling of epiphany is reward in itself. I was then able to write my annotation: “Reckitt’s Blue was an early laundry whitener, manufactured by Reckitt & Sons in Hull, England. The product was a dark, rich blue in colour.” I also quickly realized that the text contained a typo, it should read “Rickitt’s” and not “Ricketts.” The work on this annotation forced us to create an emendation to the text—of which they are not many.

In conclusion, my job as a research assistant has been fun and challenging, but most importantly, it has given me a “hands-on” education that is invaluable.

June 23, 2010

My DHSI project and experience

Now that I’ve finally made it home from DHSI, and had a chance to process the week, I thought I’d share what I was working on in my course, and my thoughts that came out of it.

I was in the TEI fundamentals course, and as I am not currently working on my own EMiC project but working as an R.A. for Zailig on the PK Page project, I decided to mark-up a text that interests me. I ended up choosing the opening page to Douglas Coupland’s seminal novel Generation X.

I chose this text for a number of reasons. First, it is one of the main texts for my thesis, which means that I had it with me. But secondly, and more importantly, I chose it because I wanted to figure out how to encode the interesting and unusual non-textual elements on the page. Primarily, the fact that the second paragraph break (line 10) isn’t represented by a line break followed by an indentation. Instead, it is simply notated with the symbol for a new paragraph (¶). (This only happens on the first page of every new chapter, not throughout the whole text). Secondly, I was interested in the photo that is inset into the text, breaking up the text, and even causing a soft-hyphen on the word “transportation.” To me, these two features are essential to the text.

After my DHSI course, however, this is the coding that resulted for this introductory paragraph.

During my course, I asked the professors how I could go about coding these two non-textual elements. The short answer was “You can’t.” TEI is only meant to encode the text itself, and the professors explained that these elements were not part of the text, and therefore had no reason to be encoded. When I pushed them, they suggested that I employ the ‘rend’ attribute to solve my first problem—that of the ¶ symbol. As you will notice in my image, the line that is highlighted contains a new paragraph, with the rend attributes “¶,” “notes-new-paragraph,” and “no line break”. You will also notice that the ¶ symbol does not appear in the text itself, but is simply hidden away in the code. Their argument was that this symbol clearly indicates a new paragraph, and nothing more. While I strongly disagree, I left it as they suggested.

As for the second problem, the professors suggested that I simply pretend that the image doesn’t exist, and remove the soft-hypen from the text. I could always link to a scan of the page, where avid readers could discover the image inset into the text on their own. As you can see from my coding, I decided to leave the soft-hypen in the word “transportation,” but I did not find a satisfactory way to represent that the text was being interrupted by an image.

Although this is a very short text, it taught me a number of lessons about TEI, and about what is currently possible with digitized texts.

1) TEI is, first and foremost, about encoding the ‘text’. While it is possible to encode non-textual elements, there is no agreed upon method. TEI has a very clear hierarchy.

2) Encoding a text in TEI, like all forms of editing, is subjective. It can be deceiving, because there are strict rules on what is and what is not allowed, etc. But in the end, it is not scientific, nor purely objective.

3) Every editor should strive to make his or her editorial method as clear and as transparent as possible. I am not convinced that TEI encoding explicitly allows for this.

4) A good IMT is definitely needed to support the design and layout issues that are not supported by TEI encoding alone. Although I am wary of simply telling readers that everything that isn’t in the TEI encoding can be found in an accompanying marked-up image, it is better than just a TEI encoded text.

So there are my few thoughts. I am anxious to see other EMiC projects as they develop, to see if anyone else encounter similar issues, and how they are resolved.

Cheers.

June 8, 2010

Thoughts so far on TEI

After two days of TEI fundamentals, I have come to a few conclusions.

First, the most interesting thing about TEI is not the things that you can do, but the things that you cannot do. TEI is, as far as I understand it, only concerned with the content of the text, ignoring everything else (paratextual elements, marginalia, interesting layout, etc.) – stuff some of us find extremely valuable. On top of this, the coding for variants is messy, complicated, and would be next to impossible for complicated variants. For an example of TEI markup for variants, check out: http://www.wwp.brown.edu/encoding/workshops/uvic2010/presentations/html/advanced_markup_09.xhtml.

As a result, I am glad that EMiC is developing an excellent IMT – this should solve many of the limitations of TEI, at least from my perspective.

Finally, while participants can all learn basic TEI and encoding, the next step of course would be to establish a CSS stylesheet. It seems to me that EMiC, like all publishing houses, should establish a single organization style, and design a stylesheet that all EMiC participants are free to use. This would ensure a consistent design and look of all EMiC orientated projects in their digital form. Maybe this could be something discussed at a roundtable at next-years speculated EMiC orientated course at DHSI.

Something to ponder.